Symptoms & Diagnosis

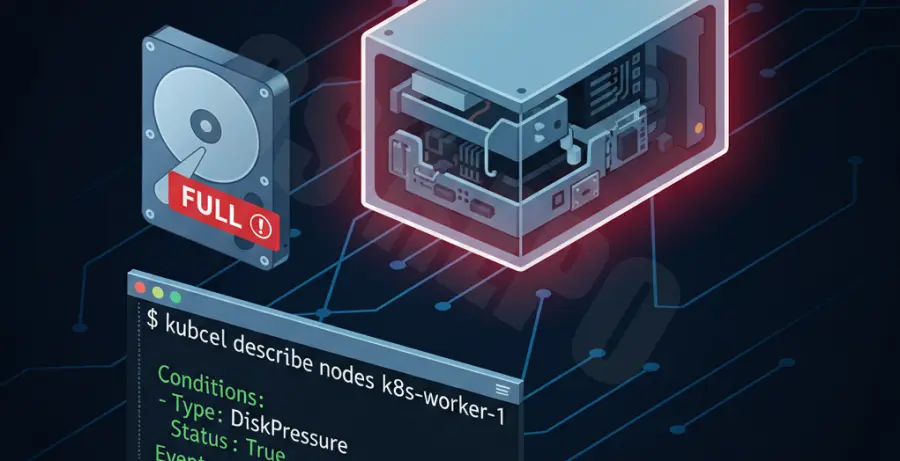

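In Kubernetes, disk pressure occurs when the free space or free inodes on a node’s root filesystem (nodefs) or image filesystem (imagefs) fall below the kubelet’s eviction thresholds. By default, that means less than 10% available on nodefs or less than 15% available on imagefs. When the kubelet detects this, it sets the node’s DiskPressure condition to True and begins evicting pods to reclaim space.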

The most common symptom is pods moving into an Evicted status. You can verify this by running the following command:

kubectl get pods -A | grep Evicted

To confirm the root cause, describe the evicted pod. You will see a message similar to “Pod was evicted to free up resources” followed by “DiskPressure”.

kubectl describe pod [POD_NAME] -n [NAMESPACE]

You should also check the status of the node itself to see if the DiskPressure condition is active:

kubectl describe node [NODE_NAME]
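On a cluster with many nodes, describing each one by hand gets tedious. The following is a minimal sketch that asks the API server for the DiskPressure condition of every node in one call. The function names `disk_pressure_status` and `nodes_under_disk_pressure` are hypothetical helpers introduced here for illustration, and the sketch assumes `kubectl` is already configured for the cluster.

```shell
# List every node with the status of its DiskPressure condition.
# The JSONPath filter picks the condition whose type is DiskPressure;
# a status of "True" means the condition is active.
disk_pressure_status() {
  kubectl get nodes -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.status.conditions[?(@.type=="DiskPressure")].status}{"\n"}{end}'
}

# Print only the names of nodes that currently report DiskPressure.
nodes_under_disk_pressure() {
  disk_pressure_status | awk -F'\t' '$2 == "True" { print $1 }'
}
```

After sourcing these functions, running `nodes_under_disk_pressure` prints one node name per line, or nothing if no node is affected.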

Troubleshooting Guide

Once you have confirmed the eviction is due to disk pressure, you must identify what is consuming the space on the worker node. SSH into the affected node and check the disk usage.

df -h

Typically, disk pressure is caused by one of three things: bloated container logs, unused container images, or large emptyDir volumes. Use the following table to identify the likely culprit:

| Component | Default Path | Likely Cause |
|---|---|---|
| Container Logs | /var/log/pods | Applications writing excessive stdout/stderr. |
| Container Runtime | /var/lib/docker or /var/lib/containerd | Old, unused images or stopped containers. |
| Kubelet Data | /var/lib/kubelet | Large emptyDir volumes used by workloads. |
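To see which of these paths is actually the largest on a given node, you can compare them with `du`. The sketch below is one way to do that; `dir_sizes` is a hypothetical helper name, and it assumes you run it from a root shell so that `du` can read every directory.

```shell
# Print the size of each existing directory in kilobytes, largest first.
# du -sk emits "SIZE<TAB>PATH", which sort -rn orders numerically.
dir_sizes() {
  for dir in "$@"; do
    # Skip paths that do not exist on this node
    # (for example /var/lib/docker on a containerd node).
    [ -d "$dir" ] && du -sk "$dir" 2>/dev/null
  done | sort -rn
}
```

For example, `dir_sizes /var/log/pods /var/lib/docker /var/lib/containerd /var/lib/kubelet` lists the paths from the table with the heaviest one on top.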

1. Clean Up Unused Images

If the image filesystem is full, you can manually trigger a cleanup of unused images. For nodes using containerd, use crictl:

sudo crictl rmi --prune

2. Clear Container Logs

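If logs are the issue, you can temporarily clear them to bring the node back to a “Ready” state. Note that this is a stop-gap measure: the log contents are lost, and the files will grow again. Truncate the files rather than deleting them, because the space held by a deleted file is not released while the container runtime still has it open.

sudo find /var/log/pods/ -type f -name "*.log" -exec truncate -s 0 {} +

Prevention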

Fixing an eviction is reactive. To prevent disk pressure from recurring, you must implement resource limits and proper maintenance configurations.

Set Ephemeral Storage Limits

Define requests and limits for ephemeral-storage in your pod manifests. This ensures that a single pod cannot consume the entire node disk.

resources:
  requests:
    ephemeral-storage: "500Mi"
  limits:
    ephemeral-storage: "1Gi"

Configure Kubelet Garbage Collection

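You can tune the kubelet configuration to make image garbage collection more aggressive. Lower the imageGCHighThresholdPercent and imageGCLowThresholdPercent fields (defaults: 85 and 80). Garbage collection starts when disk usage exceeds the high threshold and removes unused images until usage drops to the low threshold.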

# Example kubelet config snippet
imageGCHighThresholdPercent: 80
imageGCLowThresholdPercent: 70

Implement Log Rotation

Ensure container logs are rotated. If you use Docker, set log-opts in daemon.json to limit the size and number of log files. With containerd and other CRI runtimes, the kubelet rotates container logs itself, so tune the containerLogMaxSize and containerLogMaxFiles fields in the kubelet configuration. A logging agent (like Fluentd or Logstash) ships logs off the node, but it does not replace rotation.
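For the Docker case, the relevant options are `max-size` and `max-file` under `log-opts` for the `json-file` log driver. The sketch below writes a sample configuration to a path of your choosing so that you can review it before merging it into the real daemon.json. The helper name `write_docker_log_config` is hypothetical, and the values 10m and 3 are illustrative assumptions rather than recommendations.

```shell
# Write a sample Docker daemon configuration with log rotation to the given path.
# The size and file-count values are placeholders; pick ones that suit your workloads.
# This overwrites the target file, so point it at a scratch path first.
write_docker_log_config() {
  cat > "$1" <<'EOF'
{
  "log-driver": "json-file",
  "log-opts": {
    "max-size": "10m",
    "max-file": "3"
  }
}
EOF
}
```

For example, `write_docker_log_config /tmp/daemon.json` produces a file you can merge by hand into /etc/docker/daemon.json. Docker must be restarted to pick up the change, and the new settings apply only to containers created afterwards.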

Finally, set up monitoring alerts using Prometheus and Alertmanager to notify your team when node disk usage exceeds 70%, allowing you to intervene before evictions occur.
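Until such an alert is in place, the same 70% threshold can be spot-checked directly on a node. This is only a rough sketch of the threshold logic, not a substitute for Prometheus and Alertmanager; `disk_used_pct` and `over_threshold` are hypothetical helper names.

```shell
# Print the used-space percentage (integer, without the % sign)
# of the filesystem that holds the given path.
# df -P produces POSIX-format output whose fifth column is the capacity, e.g. "42%".
disk_used_pct() {
  df -P "$1" | awk 'NR == 2 { sub(/%/, "", $5); print $5 }'
}

# Succeed (exit status 0) when usage is strictly above the given threshold.
over_threshold() {
  [ "$(disk_used_pct "$1")" -gt "$2" ]
}
```

For example, `over_threshold /var/lib/kubelet 70 && echo "disk usage above 70%"` prints a warning only when the filesystem holding that path has crossed the threshold.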